
How to Train LLMs to “Think” (o1 & DeepSeek-R1)

Advanced reasoning models explained

In September 2024, OpenAI released its o1 model, trained with large-scale reinforcement learning, which gives it “advanced reasoning” capabilities. Unfortunately, the details of how they pulled this off were never shared publicly. Today, however, DeepSeek (an AI research lab) has replicated this reasoning behavior and published the full technical details of their approach. In this article, I will discuss the key ideas behind this innovation and describe how they work under the hood.

OpenAI’s o1 model marked a new paradigm for training large language models (LLMs). It introduced so-called “thinking” tokens, which enable a sort of scratch pad that the model can use to think through problems and user queries.

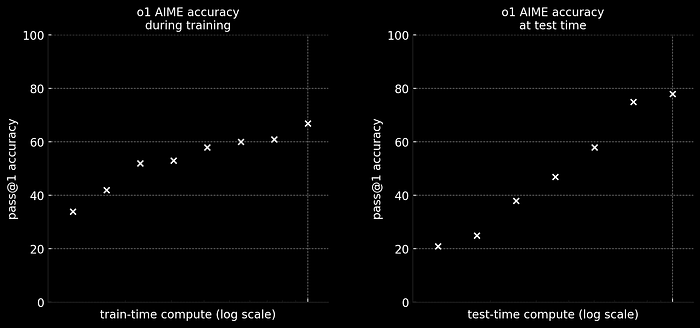
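To make the scratch pad idea concrete, here is a minimal Python sketch of how a raw completion could be split into its “thinking” part and its final answer. It assumes the reasoning is wrapped in `<think>` and `</think>` tags, which is the convention DeepSeek-R1 uses; o1’s actual tokens are not public. The function name `split_reasoning` and the example completion are hypothetical, written by hand for illustration.

```python
import re
from typing import Tuple

# Assumption: reasoning is delimited by <think>...</think>, as in DeepSeek-R1.
# o1's real delimiters are not public.
THINK_PATTERN = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(completion: str) -> Tuple[str, str]:
    """Return (reasoning, answer) extracted from a raw completion string."""
    match = THINK_PATTERN.search(completion)
    if match is None:
        # No thinking block found: treat the whole completion as the answer.
        return "", completion.strip()
    reasoning = match.group(1).strip()
    # Everything after the closing tag is the user-facing answer.
    answer = completion[match.end():].strip()
    return reasoning, answer


# Hypothetical completion, not real model output.
example = "<think>17 * 3 = 51, and 51 + 4 = 55.</think>The result is 55."
reasoning, answer = split_reasoning(example)
print("Reasoning:", reasoning)
print("Answer:", answer)
```

Separating the two parts this way is what lets an application show only the final answer to the user while keeping the reasoning available for inspection.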

The major insight from o1 was that performance improves with increased test-time compute. This is just a fancy way of saying that the more tokens a model generates, the better its response tends to be. The figure below, reproduced from OpenAI’s blog, captures this point nicely.

In the plots above, the y-axes show model accuracy on AIME (math problems), while the x-axes show compute: train-time on the left and test-time on the right. The left plot depicts the well-known neural scaling laws that kicked off the LLM rush of 2023. In other words, the longer a model is trained (i.e., the more train-time compute), the better its performance.

On the right, however, we see a new type of scaling law. Here, the more tokens a model generates (i.e. test-time compute), the better its performance.

“Thinking” Tokens

A key feature of o1 is its so-called “thinking” tokens. These are special tokens introduced during post-training, which delimit the model’s chain of thought (CoT) reasoning (i.e. thinking through the problem). These special tokens are important for two reasons.